Xcode 6 and iOS 8 have been released together with a new way of beta testing iOS applications. This is an introduction and a step-by-step guide for setting up an iTunes Connect and TestFlight beta test with internal testers.

After months of beta testing iOS 8 myself, I’ve been waiting for this day to come: the day when Apple adds scalability and a new dimension to beta testing of your iOS applications. Until now there’s been a lot of speculation and prediction about what would happen around beta testing. We now know most of the answers that we, the team at Beta Family and every iOS developer, were looking for. With Xcode 6 and iOS 8, which are the requirements for this service, there’s a whole new possibility available when it comes to beta testing your application. Through iTunes Connect you will manage your testers, and TestFlight will make your app available to them. Xcode 6 and iOS 8 are available for developers inside the iOS Dev Center. Developers will no longer be held back by UDIDs when beta testing; instead they will handle testers’ Apple IDs.

In the beginning you’ll only be able to beta test your app with 25 internal testers. Soon, however, 1000 external testers, who are not part of your development organization, will also be available for beta testing. When deploying an app to external testers, the app has to go through a Beta App Review and must follow the App Store Review Guidelines before beta testing can start. A review will be required for every new version that contains significant changes. You’ll be able to test 10 apps at the same time. Read more about it here.

All this is a big advancement for Apple in beta testing, and it’s a welcome change for both developers and Beta Family. We at Beta Family will make several changes and updates to our service. First we’ll organize testers’ Apple IDs for easy distribution. We’ll also add options for developers to choose when they want to test a beta version through iTunes Connect and whether they want to test it with internal or external testers.

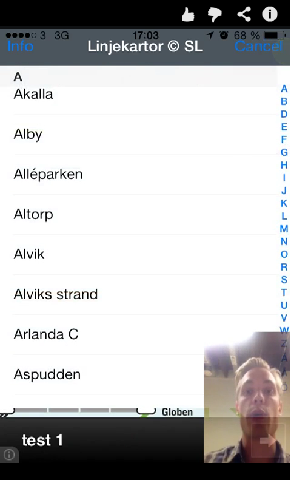

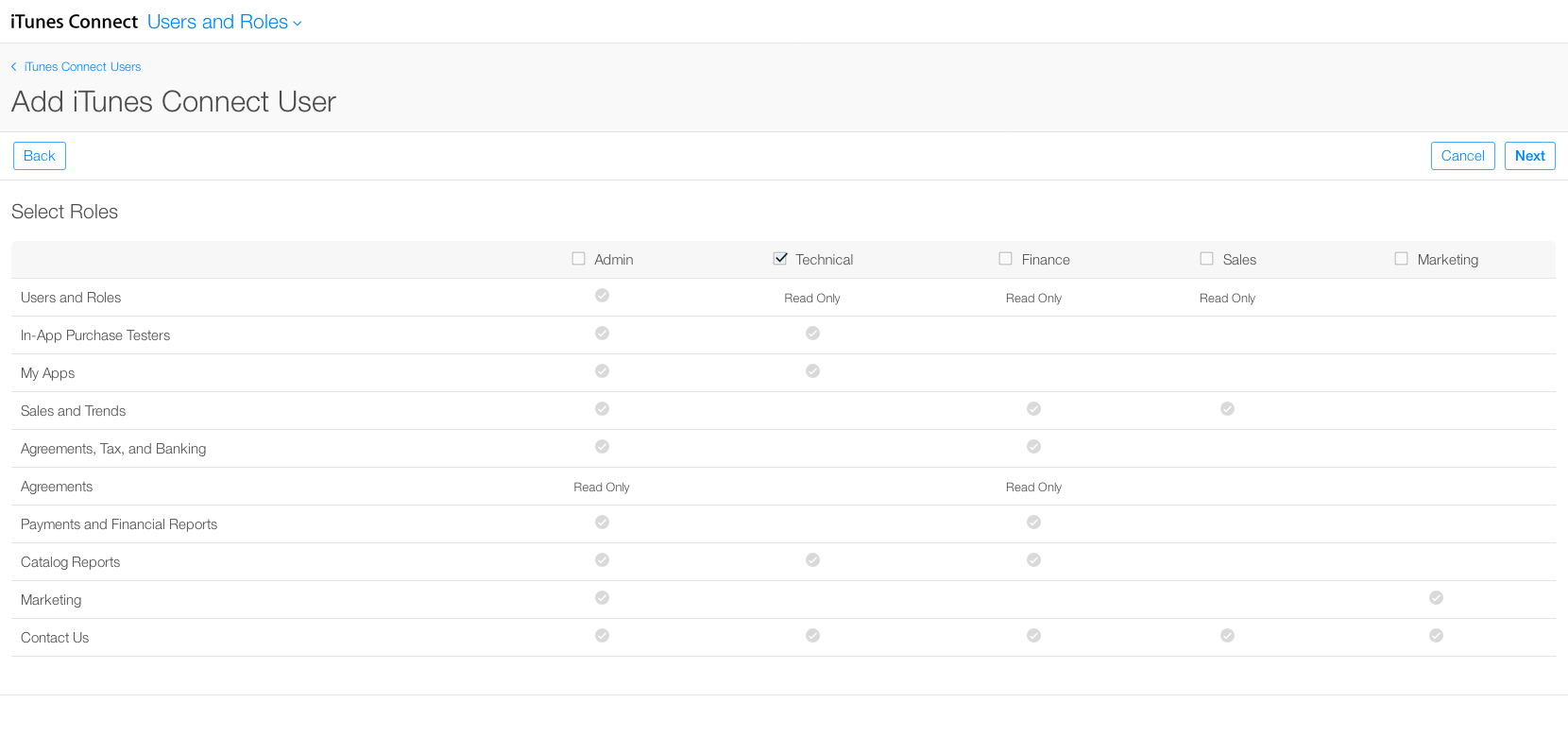

1. Sign in to iTunes Connect and add iTunes Connect users under “Users and Roles”. Once you’ve entered the details for a user, you’ll be able to choose the user’s role. “Technical” (Read Only) is the role to choose if you don’t want the user to be able to manage your available apps.

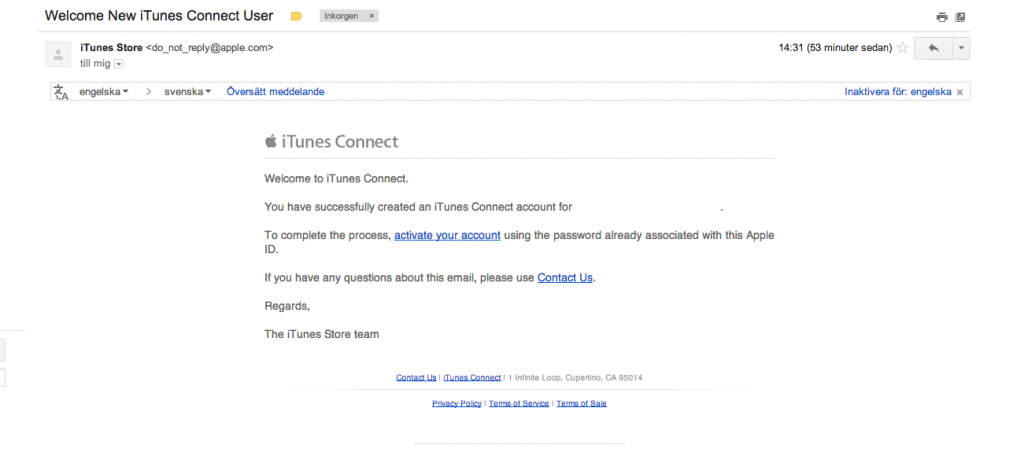

The users you’re adding will receive an e-mail. You can add and delete users whenever and however you want. Every user will have to verify their e-mail.

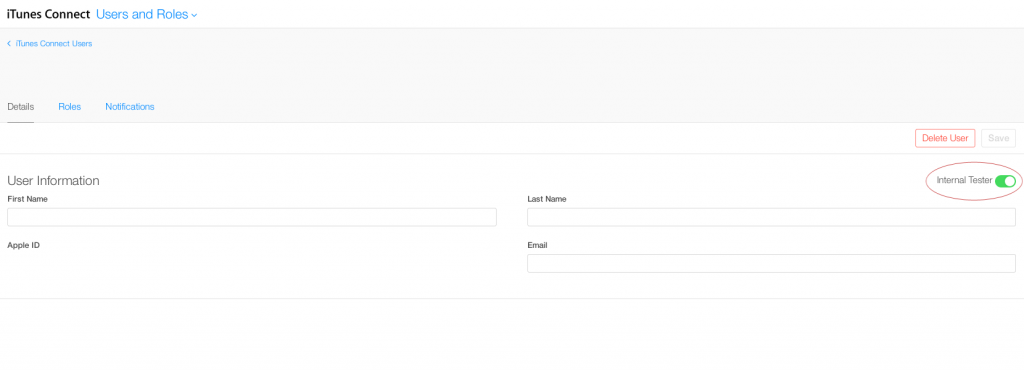

When the users have verified their e-mail you’ll find them under “Users and Roles”. Click on the user’s e-mail address and turn on the “Internal Tester” switch.

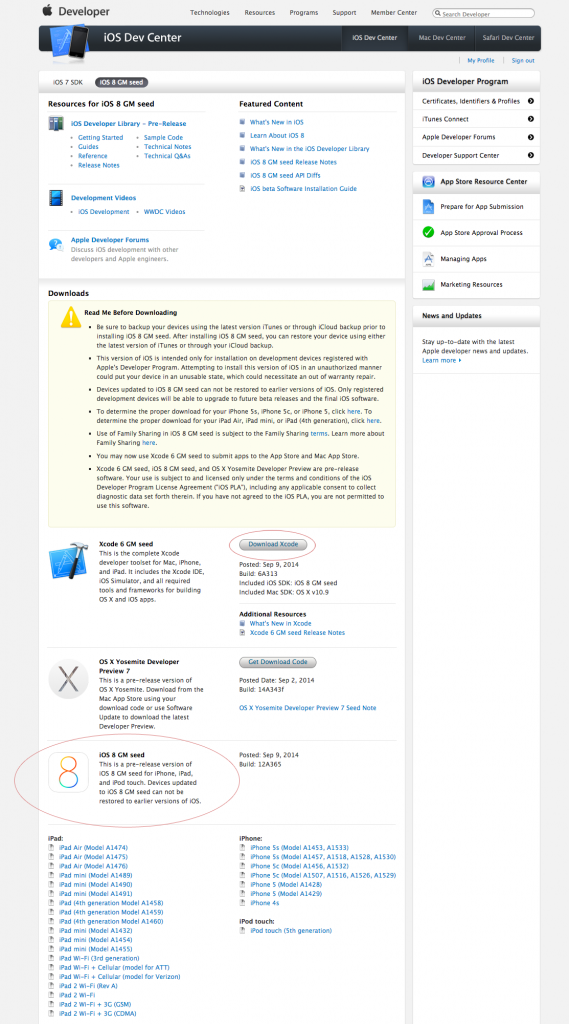

2. Install Xcode 6 from the iOS Dev Center. If you want to keep previous versions of Xcode you can create a folder inside Applications and move your existing Xcode version to that folder.

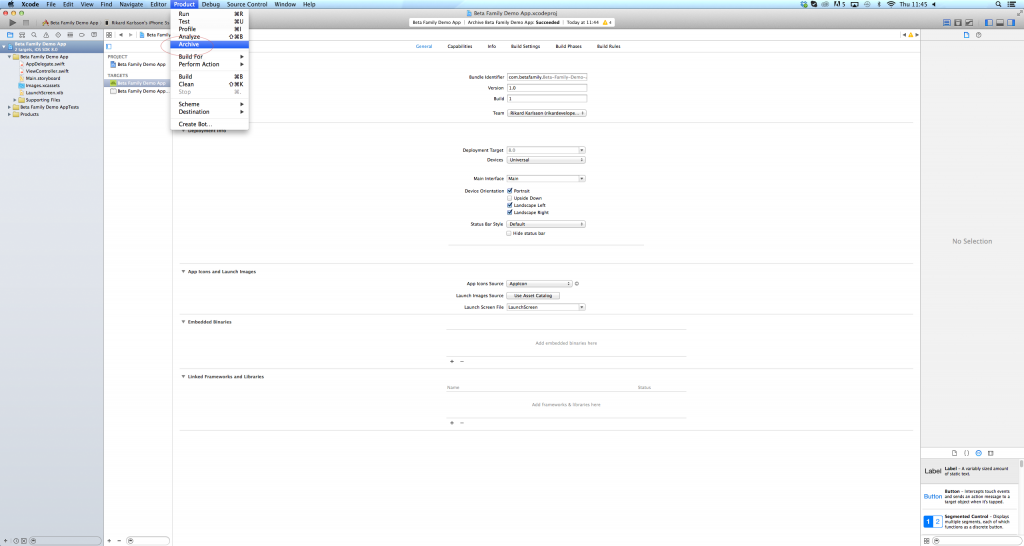

3. Archive your project by choosing Product > Archive.

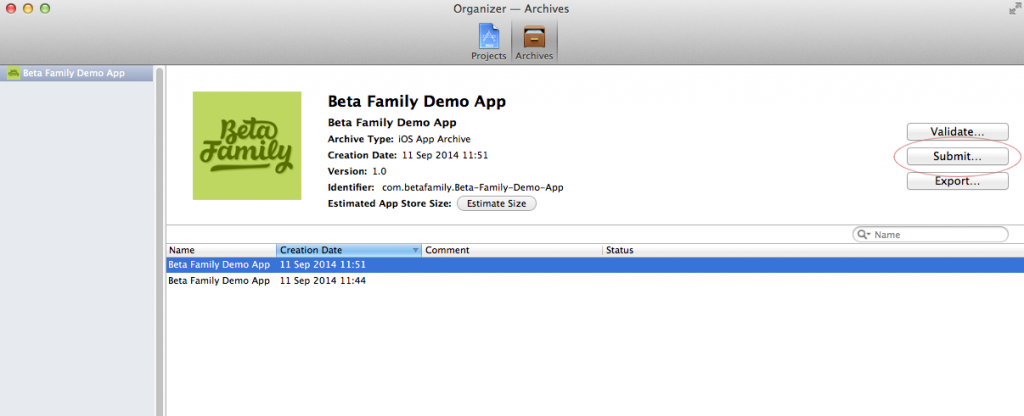

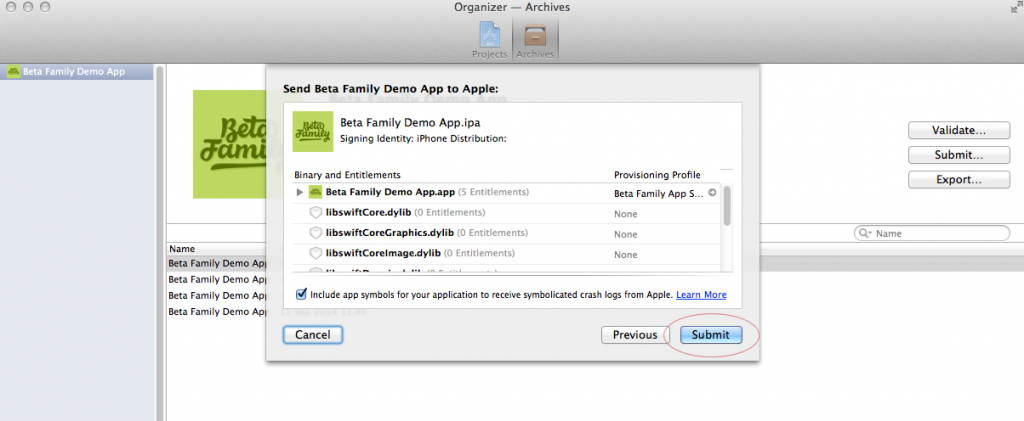

Now click on the “Submit…” button.

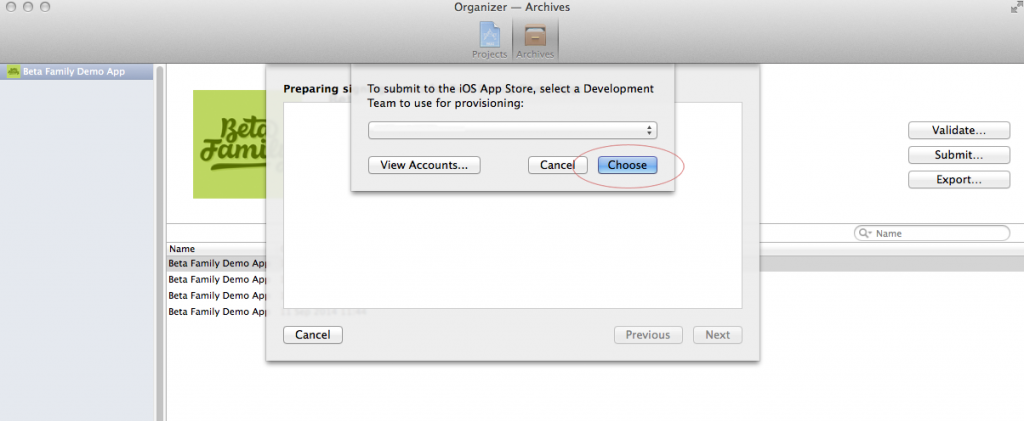

Choose your Development Team to use for provisioning.

Submit your app.

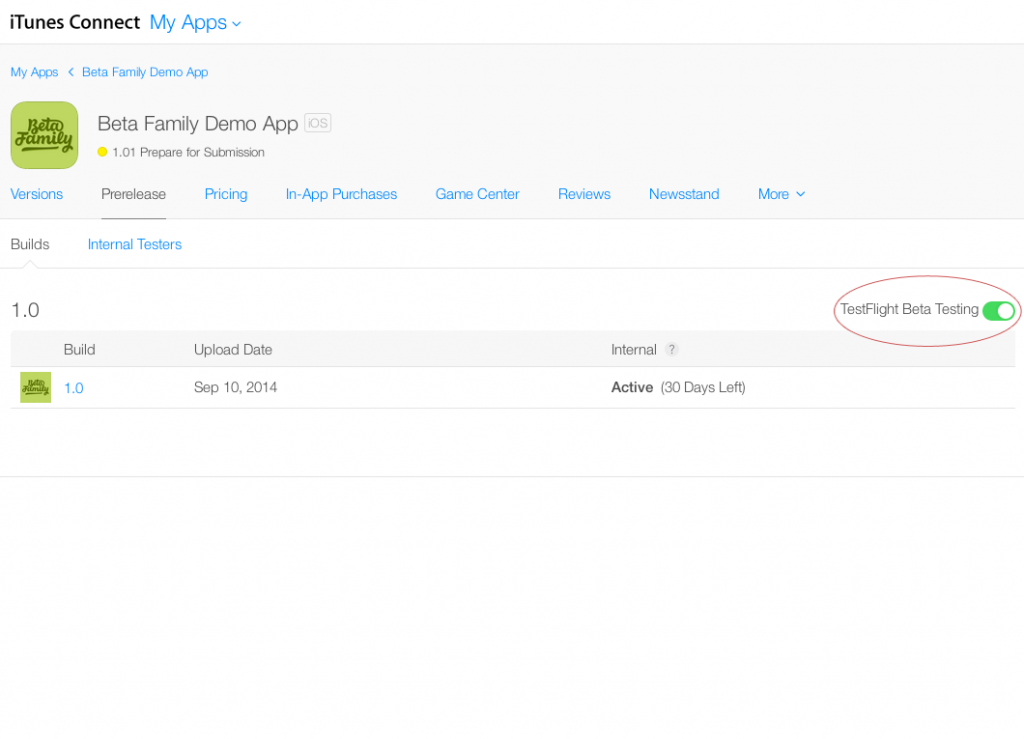

4. In your iTunes Connect account you can now browse to your app; you’ll find it under “My Apps”. Go to “Prerelease”, where you’ll find your uploaded build. Enable beta testing for this build by turning on the switch on the right-hand side of the screen.

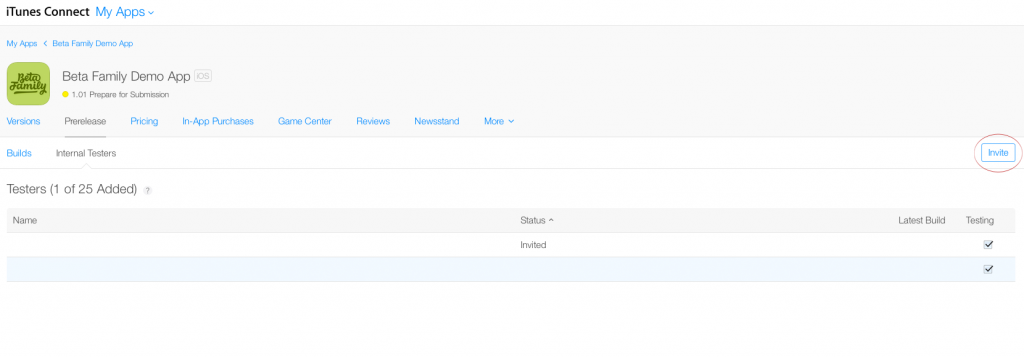

5. Inside “Prerelease” there’s a submenu containing “Builds” and “Internal Testers”. Now that you’ve enabled TestFlight beta testing you can invite testers under “Internal Testers”. Checkmark the testers you want to invite and click the “Invite” button.

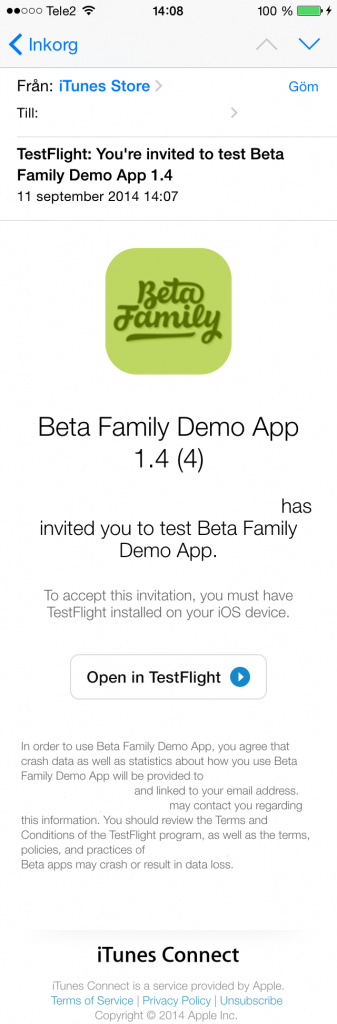

Testers will receive an e-mail with an invite.

When the testers click “Open in TestFlight” they will be redirected to the TestFlight app and asked to install the app.

Testing is now under way and an orange dot will appear next to the beta version of the app.

Conclusion

We at Beta Family will still provide developers with our awesome community of testers, but now without the trouble involving UDIDs. Tester management will be easier with Apple IDs, which Beta Family, of course, will provide for you. There will also be zero file handling, which will without doubt speed up the testing process, since uploading builds and adding users can be managed instantly.

Note: Of course you will be able to use the system just like before.

The hospital St Mary’s Mercy in Michigan, USA wrongfully informed the authorities and Social Security that 8,500 of its patients had passed away between October 25 and December 11, 2002. A spokeswoman for the hospital explained that an event code in the patient-management software had been wrongly mapped: the code 20 for expired was used instead of 01 for discharged. Needless to say, a plethora of legal, billing and insurance issues followed the incident.
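A mapping mistake like this is easier to catch when the codes live in one explicit table and anything unknown is rejected. The following is a minimal sketch, assuming only the two codes named above (20 for expired, 01 for discharged). The names `EVENT_CODES` and `describe_event` are hypothetical and are not taken from the hospital’s software.

```python
# Hypothetical lookup table; only the two codes mentioned in the text are included.
EVENT_CODES = {
    "01": "discharged",
    "20": "expired",
}


def describe_event(code: str) -> str:
    """Translate an event code into its meaning, failing loudly on unknown codes."""
    try:
        return EVENT_CODES[code]
    except KeyError:
        raise ValueError(f"unknown event code: {code!r}") from None


if __name__ == "__main__":
    for code in ("01", "20"):
        print(code, "->", describe_event(code))
```

Keeping the table in a single place means a test can assert the meaning of each code before any report is sent out.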

The state of California had been asked in 2011 to reduce its prison population by 33,000, with preference given to non-violent offenders. Again, a mapping error occurred, reversing the preference criteria and instead giving non-revocable parole to approximately 450 violent inmates, exempting them from having to report to parole officers after their release.

The market-making and trading firm Knight Capital Group was using a trading algorithm that, during a single 30-minute period, inexplicably went against sound economic strategy by buying high and selling low, and the firm’s stock dropped 62 percent in just one day. The company would not describe the issue in detail but referred to it as a “large bug, infecting its market-making software”.

NASA launched the Mars Climate Orbiter at the end of 1998, but due to an error in the ground-based software, the Orbiter went missing in action after 286 days. The orbit had been incorrectly calculated, in large part because different programming teams used different units. As a result, the thrust was almost 4.5 times more powerful than intended, leading to the wrong entry point into the Mars atmosphere. The $327 million Orbiter disintegrated.
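The “almost 4.5 times” figure is what you would expect if one team reported impulse in pound-force seconds and another read the same number as newton seconds. The sketch below assumes that was the mismatch, which is how the incident is commonly described. The function name and the sample value are made up for illustration.

```python
# One pound-force expressed in newtons (exact by definition of the units).
LBF_TO_NEWTON = 4.4482216152605


def lbf_s_to_newton_s(impulse_lbf_s: float) -> float:
    """Convert an impulse from pound-force seconds to newton seconds."""
    return impulse_lbf_s * LBF_TO_NEWTON


# Made-up sample value: a figure produced in pound-force seconds...
reported_value = 100.0
# ...that downstream code wrongly treats as if it were already newton seconds.
assumed_newton_s = reported_value
actual_newton_s = lbf_s_to_newton_s(reported_value)

ratio = actual_newton_s / assumed_newton_s

if __name__ == "__main__":
    print(f"actual effect is {ratio:.2f}x what the reader assumed")
```

Carrying the unit in the function name, or in a dedicated type, makes this kind of silent mismatch much harder to introduce.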

During the Gulf War and Operation Desert Storm in 1991, the Patriot Missile System failed to track and intercept a Scud missile aimed at an American base. The software had a timing issue which caused a sensor delay that would continue to grow until system reboot, with a loss of detection accuracy after approximately 8 hours. On the date of this incident, the system had been operating continuously for more than 100 hours, resulting in such a big delay that the software was actually looking for the missile in the wrong place. The Scud missile hit the American barracks, leaving 28 dead and over 100 injured.
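How a tiny timing error grows with uptime can be shown with a little arithmetic. This is a rough sketch that assumes the widely reported explanation: the system counted time in tenths of a second and stored the value 0.1 in a fixed-point register with 23 fractional bits, chopping off the rest. Those details are not from this article, and the names below are hypothetical.

```python
import math

FRACTION_BITS = 23      # assumed number of fractional bits in the register
TICKS_PER_SECOND = 10   # assumed clock resolution: one tick per tenth of a second
TICK_SECONDS = 0.1

# The value actually stored for 0.1 after chopping to FRACTION_BITS binary places.
stored_tick = math.floor(TICK_SECONDS * 2**FRACTION_BITS) / 2**FRACTION_BITS
# Time lost on every single tick because of the chopped bits.
error_per_tick = TICK_SECONDS - stored_tick


def drift_seconds(hours_running: float) -> float:
    """Accumulated clock error after running this many hours without a reboot."""
    ticks = hours_running * 3600 * TICKS_PER_SECOND
    return ticks * error_per_tick


if __name__ == "__main__":
    for hours in (8, 100):
        print(f"{hours:>3} h uptime -> clock off by {drift_seconds(hours):.4f} s")
```

Under these assumptions the clock is off by about a third of a second after 100 hours, which is a long time when tracking a fast-moving missile. A reboot resets the tick count and with it the accumulated error.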

During the mid-1980s, a medical radiation therapy device called the Therac-25 was used in hospitals. The machine could operate in two modes: low-power electron beam or X-ray, which was much more powerful and only supposed to function if a metal target was placed between the device and the patient. The previous model, Therac-20, had an electromechanical safety interlock to ensure that this metal target was in place, but it was decided that a software lock would replace it for the Therac-25. Due to a particular kind of bug called a “race condition”, it was possible for a device operator typing fast to bypass the software check and mistakenly administer lethal radiation doses to the patient. The issue resulted in at least five deaths.

US long-distance callers using AT&T on January 15, 1990 found that no calls were going through. The long-distance switch network kept rebooting for nine hours, at first leading the company to believe it was being hacked. The issue turned out to be software related: a timing flaw led to cascading errors.
